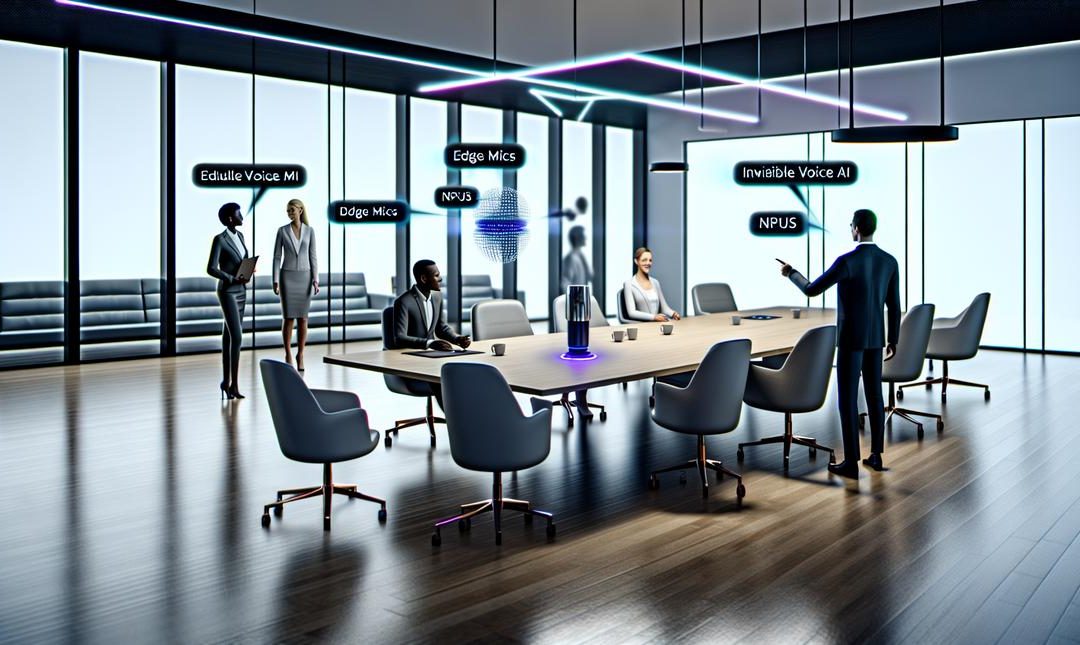

Edge Mics and NPUs are changing how we interact with Voice AI and folding it into everyday business operations. Explore how they can cut costs, save time, and streamline processes while boosting productivity and keeping you ahead in a competitive market.

Understanding Edge Mics and NPUs

Edge mics capture sound at the source, and NPUs process it right beside the user.

Edge mics are not just microphones. They are arrays with beamforming, voice activity detection, acoustic echo cancellation, and wake word spotting baked into tiny DSPs. They focus on the person talking, cut room noise, and cancel echo from the device's own loudspeaker so barge-in works. I have seen cheap single mics ruin great models, so the front end matters more than most admit.
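To make one of those front-end stages concrete, here is a minimal sketch of voice activity detection based on frame energy. It is an illustration only: real edge mics run this kind of logic in DSP firmware with far more robust methods, and the function names, frame length, and threshold below are assumptions chosen for the example.

```python
import math

def frame_rms(frame):
    """Root-mean-square level of one frame of samples in [-1.0, 1.0]."""
    return math.sqrt(sum(s * s for s in frame) / len(frame))

def detect_voice(samples, frame_len=160, threshold=0.02):
    """Return one boolean per full frame: True where RMS exceeds the threshold.

    frame_len=160 is 10 ms at 16 kHz. Both defaults are illustrative,
    not tuned values from any product.
    """
    flags = []
    for start in range(0, len(samples) - frame_len + 1, frame_len):
        frame = samples[start:start + frame_len]
        flags.append(frame_rms(frame) > threshold)
    return flags

# Synthetic input: 10 ms of silence, then 10 ms of a 440 Hz tone at 16 kHz.
silence = [0.0] * 160
tone = [0.5 * math.sin(2 * math.pi * 440 * n / 16000) for n in range(160)]
flags = detect_voice(silence + tone)
print(flags)  # [False, True]
```

A fixed threshold like this breaks down in noisy rooms, which is one reason the array and the DSP matter.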

NPUs specialise in matrix maths. They run compact speech models locally with low power draw, using int8 or even int4 quantisation. That means fast wake word checks, real time transcription, intent detection, and even small TTS loops without a cloud hop. The Intel Core Ultra NPU is a good reference point, though not the only one.
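For readers who have not met int8 quantisation before, the sketch below shows the basic idea with symmetric per-tensor scaling. It is a simplified illustration, not how any particular NPU toolchain does it. The function names and sample weights are made up for the example.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantisation: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Recover approximate float values from the stored integers."""
    return [v * scale for v in q]

# Illustrative weights only.
weights = [0.5, -1.27, 0.003, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Rounding error per weight is at most half a quantisation step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q)  # [50, -127, 0, 100]
print(max_error <= scale / 2 + 1e-12)  # True
```

Each weight now fits in one byte instead of four, at the cost of a small rounding error. The same scheme with a narrower integer range gives int4, with a larger error per weight.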

This is why voice feels smooth, almost invisible: lower latency, fewer round trips, stronger privacy. It also cuts server bills, which I think matters when usage spikes.

Practical wins

- Faster responses, closer to the 200 ms threshold users feel as instant, see Latency as UX, why 200ms matters for perceived intelligence.

- Stable performance in patchy connectivity, perhaps offline.

- Consistent audio quality across rooms and devices.

- Lower energy per interaction, longer battery life.
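One way to reason about the 200 ms threshold is as a budget shared across pipeline stages. The sketch below adds up per-stage timings and checks them against that budget. The stage names and every millisecond figure are hypothetical placeholders, not measurements. Swap in numbers from your own devices.

```python
BUDGET_MS = 200  # the threshold named in the text

def total_latency_ms(stages):
    """Sum per-stage latencies given as a dict of name -> milliseconds."""
    return sum(stages.values())

def within_budget(stages, budget_ms=BUDGET_MS):
    """True when the whole pipeline fits inside the latency budget."""
    return total_latency_ms(stages) <= budget_ms

# Hypothetical placeholder timings, in milliseconds.
local_stages = {
    "capture": 20,
    "wake_word": 15,
    "transcribe": 90,
    "intent": 25,
    "respond": 40,
}
# Same pipeline with an assumed network round trip added.
cloud_stages = dict(local_stages, network_round_trip=120)

print(total_latency_ms(local_stages), within_budget(local_stages))  # 190 True
print(total_latency_ms(cloud_stages), within_budget(cloud_stages))  # 310 False
```

In this sketch, a single extra hop is enough to blow the budget, even when every other stage is unchanged.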

There is a trade-off between model size and edge power. Yet for most commands and automations, the local stack wins. I have changed my mind on that twice, to be fair.

Voice AI’s Role in Modern Business

Voice AI now sits at the core of daily work.

It removes drudge work and keeps momentum high. Speak a task, see it done. Actions are captured, meetings scheduled, emails drafted, and notes logged into Salesforce while the conversation flows. Because Edge Mics and NPUs run close to the user, it feels instant, private, and steady even on a loud shop floor.

Customer engagement sharpens. People talk in their own words, the system recognises intent, answers clearly, and only calls in a human when the stakes rise. Wait times fall. Replies feel more personal, even if they are templated, which is a bit strange, I know, yet it works.

Insight comes baked in. Every call, huddle, or corridor chat becomes a structured signal. You see sentiment shifts, objections, friction points, and next best actions inside the hour, not the quarter.

- Automate admin that drags teams down.

- Lift engagement with context that feels human.

- Surface insights at the point of action.

The productivity lift is real. Teams reclaim hours, first response time drops, and error rates shrink. I have seen double-digit reductions in handle time, perhaps more in lean squads. Edge Mics cut noise and catch wake words cleanly. NPUs keep latency below the mental blink, so the tech disappears and results show. For playbooks that go deeper, see AI voice assistants for business productivity expert strategies.

The Power of AI Automation Tools

Automation compounds when audio meets the edge.

When you stitch AI automations to Edge Mics and NPUs, routine work simply melts. Meetings auto tag themselves, CRM notes fill in from live cues, and support tickets route by detected intent and urgency. No prompts to chase, it just happens. Zapier can catch the trigger and fan actions across your stack, quietly.
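Routing by detected intent and urgency can be as simple as a lookup with an override for urgent cases. The sketch below assumes the on-device model has already produced an intent label and an urgency score. The `Ticket` fields, queue names, and threshold are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Ticket:
    transcript: str
    intent: str      # hypothetical labels such as "billing" or "outage"
    urgency: float   # 0.0 (low) to 1.0 (high), assumed to come from the model

# Hypothetical mapping from intent label to destination queue.
ROUTES = {"billing": "finance_queue", "outage": "support_queue"}

def route(ticket, urgent_threshold=0.8):
    """Pick a queue: urgent tickets jump the line, the rest go by intent."""
    if ticket.urgency >= urgent_threshold:
        return "priority_queue"
    return ROUTES.get(ticket.intent, "general_queue")

print(route(Ticket("My invoice is wrong", "billing", 0.2)))   # finance_queue
print(route(Ticket("Everything is down", "outage", 0.95)))    # priority_queue
print(route(Ticket("Just saying hello", "smalltalk", 0.1)))   # general_queue
```

The returned queue name is what an automation tool would pick up as its trigger. Unknown intents fall through to a general queue rather than failing.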

The money part is clear. On device speech models cut cloud calls, which cuts unpredictable bills. NPUs handle wake words, diarisation and redaction locally, so you pay for fewer minutes and fewer GPUs. I have seen contact centres halve speech API spend, then trim call wrap time by several minutes. Small, yes, but relentless. Battery friendly Edge Mics hold the line, which matters on factory floors and sales floors.

NPUs also keep latency tight, often under 200 ms, so teams do not wait. Quantised models run at 4-bit or 8-bit precision, staying accurate enough for operations, I think, while keeping power draw tame. That gives managers time back to test new offers and move first.

Practical wins:

- Fewer manual notes, fewer costly errors.

- Real time triggers from tone and keywords.

- On device redaction that keeps legal calm.
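On-device redaction, in its simplest form, means masking sensitive spans in a transcript before anything leaves the device. The sketch below uses two regular expressions as a rough illustration. The patterns and labels are assumptions for the example, and real deployments need broader coverage and testing against actual transcripts.

```python
import re

# Illustrative patterns only; they will miss many real-world formats.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    # 13 to 16 digits, optionally separated by single spaces or hyphens.
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}

def redact(text):
    """Replace each matched span with a bracketed label such as [CARD]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text

sample = "Card 4111 1111 1111 1111, email jo@example.com"
print(redact(sample))  # Card [CARD], email [EMAIL]
```

Speech transcripts often spell numbers out as words, so a pattern like this only helps once the recogniser has normalised them to digits.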

For the nuts and bolts, see On-device Whisperers, building private low latency voice AI that works offline. I read it twice, perhaps too slowly, but the payoff is clear.

Enhancing Creativity and Innovation with AI

Creativity loves speed.

Edge Mics catch ideas the moment they leave your lips, then NPUs shape them on device. No lag, no cloud wait, just a clean stream of drafts, hooks, and angles. It feels invisible, which is the point. I have caught half thoughts while walking to a meeting, and they turned into a full pitch by the lift. Odd, but it works.

Here is where it gets interesting for teams:

- Retail: merchandisers speak shelf notes, the NPU spins ten punchy taglines, and store staff pick the winner before lunch.

- Media: producers riff alt intros into an Edge Mic, edits appear in seconds, and watch time rises without drama.

- Healthcare: clinicians co-create clear patient summaries by voice, with a warmer tone and fewer follow-up calls, still private.

- Hospitality: chefs narrate new tasting menus, and the model drafts stories that sell tables, not just dishes.

Campaigns get braver too. Teams trial hundreds of micro-variations, live, then keep only what pulls. See how dynamic voice ads, real time creative that talks back can fuel this test-and-learn loop. Perhaps a small lift here, a big swing there. I think the compounding matters.

One hardware note: the NVIDIA Jetson Orin inside a kiosk can run this stack quietly. No fanfare, just output. And from here, the smartest move is obvious: share playbooks and voice styles with a trusted circle. That is where the ideas multiply.

Building a Robust AI-Driven Community

Community makes AI stick.

Edge mics and NPUs fade into the background only when teams trade notes. The hardware is silent, the learning is not. I have seen engineers swap beamforming presets in a hallway chat, then cut false wakes by half the same afternoon. That kind of lift rarely comes from a spec sheet.

You need peers who have tried this in the real world: shops, kitchens, call centres. People who can tell you why diarisation drifts in glass rooms, or why wake words double-trigger near espresso machines. It sounds small, yet these are the snags that slow a rollout, perhaps for weeks.

What a strong AI circle gives you

- Peer proof: fast answers to odd bugs, like echo tails and barge-in timing.

- Playbooks for consent, redaction, and on-device logging that passes audit.

- Fair kit comparisons across mics, chips, and middleware, without vendor pressure.

- Hiring leads, procurement checklists, and sample risk clauses.

We host weekly clinics, teardown calls, and a quiet forum where people post configs and post-mortems. I think the small, private wins matter more than shiny launches. For bottom-up momentum and guardrails that stick, see Shadow IT, but smart, governing bottom-up AI adoption.

One practical note: a single field test on NVIDIA Jetson Orin with your actual noise profile can save a quarter of your budget. Slight contradiction: sometimes the chat in the room saves even more. This community keeps you current, and ready for what comes next.

Future-Proofing Business Operations

Future proofing starts with hardware you control.

Pair Edge Mics with NPUs and your voice stack stops creaking. Local wake words trigger instantly, noise gets stripped at the source, and speech flows into models without a network hop. That single decision removes latency spikes, trims cloud bills, and gives you privacy by default. If you care about call quality and task handoff speed, it is a decision worth making. I have seen hold time melt when sub-200 ms responses become the norm, and nobody asked to roll it back.

The maths is simple. NPUs run inference at low power, so you process more requests per watt. Edge Mics clean the signal, so you avoid costly retries and human rework. Combine both and your per-interaction cost drops, sometimes fast. Want the deeper logic behind speed and trust? Read on-device voice AI that works offline. It maps neatly to this.

This is not only about saving. New products appear when latency vanishes. Real time QA on sales calls. Ambient notes in the clinic. On site translation that keeps pace with people. An Intel Core Ultra NPU will do this quietly; perhaps you already have one on a desk.

If you want a plan that fits your kit and targets, **contact Alex**. We will tailor the stack, cut waste, and move faster, without drama.

Final words

Edge Mics and NPUs, when integrated with AI, give businesses a strategic edge. By streamlining operations and enhancing creativity, these tools drive efficiency and innovation. Businesses can use this technology to future-proof operations, cut costs, and boost productivity. For tailored solutions, connect with an expert to optimise your Voice AI implementation today.